Real Lessons from Scaling SOC Operations With AI

NTT Data cybersecurity leaders share 12 practical strategies for integrating AI into the SOC to reduce incident effort by up to 70%.

- By Sheetal Mehta, Karthikeyan Veerappan

- Apr 22, 2026

NTT Data has been delivering cybersecurity services to enterprises globally for over 30 years and currently offers a Unified Detection and Response service operating from global and regional SOCs. In our SOC, we’ve driven efficiency with automation, continually tuned SIEM and detection rules and onboarded waves of new analysts.

It has not been enough, however, to keep up with the growth in alert volumes and in our business.

About a year ago, we decided to introduce AI to help scale our SOC operations and deliver on our vision of Proactive Cyber Defense. We selected Simbian as our AI SOC vendor after a false start with another vendor. Today we are in production and seeing strong results, including a 50-70% reduction in effort per incident and a >50% improvement in our time to respond.

AI is not eliminating roles, but it is helping us grow capacity without needing to add headcount at the same pace as we grow.

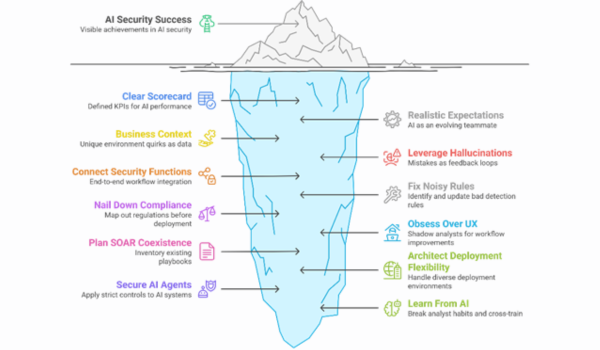

We learned many things during this project. Here is our summary of what worked, what didn’t, and the practical steps we recommend.

NTT Data’s lessons learned implementing AI in our SOC

We set up and continue to use a clear set of KPIs to track and report our progress. For us, this means:

- Incident Type Coverage – we want AI SOC to be able to process > 90% of the alerts received by the SOC. We have achieved this for IT alerts and are now looking to expand to other classes of alerts.

- Automatically Close False Positives – we want AI SOC to correctly identify and automatically close 90% of false positives. We are on track to achieve this metric.

- Time-To-Respond – we want AI SOC to reduce how long it takes to respond to an alert by at least 50%. This has been achieved.

- Recommendations – we want at least 90% of the AI SOC recommendations to be deemed as “correct” by qualified reviewers. We are still working out how best to measure this.

This scorecard kept everyone grounded and the project focused. Your organization may value different outcomes, but whatever they are, document them and get buy-in at the start of the project.
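A scorecard like the one above can be computed from a handful of raw counts. The following is a minimal sketch, not our production tooling: the `SocCounts` fields and function names are hypothetical, and only the target values come from the KPI list above. It treats every target as "at least", which is a slight simplification for the coverage goal.

```python
from dataclasses import dataclass


@dataclass
class SocCounts:
    """Raw counts for one reporting period (all field names are hypothetical)."""
    alerts_received: int          # every alert that reached the SOC
    alerts_processed_by_ai: int   # alerts the AI SOC was able to process
    false_positives: int          # alerts confirmed as false positives
    fp_auto_closed: int           # false positives the AI correctly closed itself
    baseline_ttr_min: float       # mean time to respond before AI, in minutes
    current_ttr_min: float        # mean time to respond with AI, in minutes
    recs_reviewed: int            # recommendations checked by qualified reviewers
    recs_correct: int             # reviewed recommendations judged correct


# Targets from the KPI list above, expressed as fractions.
TARGETS = {
    "incident_type_coverage": 0.90,
    "fp_auto_close_rate": 0.90,
    "ttr_reduction": 0.50,
    "recommendation_accuracy": 0.90,
}


def ratio(numerator: float, denominator: float) -> float:
    """Return numerator / denominator, or 0.0 when there is no data yet."""
    return numerator / denominator if denominator else 0.0


def scorecard(c: SocCounts) -> dict:
    """Map each KPI name to a (measured value, target met) pair."""
    values = {
        "incident_type_coverage": ratio(c.alerts_processed_by_ai, c.alerts_received),
        "fp_auto_close_rate": ratio(c.fp_auto_closed, c.false_positives),
        "ttr_reduction": ratio(c.baseline_ttr_min - c.current_ttr_min,
                               c.baseline_ttr_min),
        "recommendation_accuracy": ratio(c.recs_correct, c.recs_reviewed),
    }
    return {name: (value, value >= TARGETS[name]) for name, value in values.items()}
```

Calling `scorecard(...)` once per reporting period gives a small table that can go straight into a status report. The harder part, as noted above for recommendations, is agreeing on how the underlying counts are collected.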

One of the most important lessons we learned was that if you don’t frame AI correctly from the start, people fill the gaps with unrealistic expectations. We told our teams that AI wouldn’t be perfect, especially early on, and that its real strength would be improving week by week as it learned from our environment.

That transparency helped everyone stay focused on progress, not perfection, and prevented frustration when the model produced a questionable result. AI becomes much easier to adopt when you anchor it as a powerful but evolving teammate that needs a supervisor, not as a silver bullet.

An early design choice that paid off was to treat customer context as an essential dataset rather than an afterthought. AI can learn a lot from telemetry and tickets, but it has no way of knowing your business exceptions or the unusual-but-approved behaviors that only humans recognize. AI performs only as well as the context you feed it.

Business exceptions, asset criticality, known benign behaviors and unique operational quirks all need to be explicitly documented and provided to the platform. Once we created a structured process for capturing and maintaining that context, the AI’s accuracy and decision quality jumped. The lesson: Assume the AI knows nothing about your environment unless you tell it, and then create a structured process to gather that knowledge from your team.
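One way to make that process concrete is to give every piece of context a fixed shape, an owner and a review date. The sketch below is illustrative only: the `ContextEntry` fields, the category names and the helper functions are assumptions, not the format any particular AI SOC platform expects.

```python
from dataclasses import dataclass
from datetime import date

# Categories mirror the kinds of context named above; the identifiers are hypothetical.
ALLOWED_KINDS = {
    "business_exception",
    "asset_criticality",
    "known_benign",
    "operational_quirk",
}


@dataclass
class ContextEntry:
    kind: str          # one of ALLOWED_KINDS
    subject: str       # asset, user or process the entry describes
    description: str   # what the AI should know, in plain language
    owner: str         # person accountable for keeping the entry accurate
    review_by: date    # date after which the entry must be re-confirmed


def validate(entry: ContextEntry) -> list:
    """Return a list of problems; an empty list means the entry is usable."""
    problems = []
    if entry.kind not in ALLOWED_KINDS:
        problems.append(f"unknown kind: {entry.kind}")
    if not entry.description.strip():
        problems.append("description is empty")
    if not entry.owner.strip():
        problems.append("owner is missing")
    return problems


def stale_entries(entries: list, today: date) -> list:
    """Return the entries whose review date has passed."""
    return [e for e in entries if e.review_by < today]
```

Requiring an owner and a review date is a design choice: it turns context from a one-time data dump into something the team maintains.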

Early in our journey, the AI sometimes generated confident but incorrect reasoning or chose an odd investigative direction. Instead of treating these hallucinations as failures, we used them as learning opportunities: reviewing the cases, tightening guardrails and improving context. With consistent feedback, hallucination rates dropped significantly. The important thing is not to fear hallucinations; they’re part of the model’s learning cycle, and you can shape and shorten that cycle with good review processes.

While our core problem was scale, we also wanted to enable connections across security functions. For us, the real value shows up when incident management plugs into the rest of cyber defense: threat intel contextualized to assets, exposure data tied to cases and clear risk verdicts at the user or system level.

That’s the backbone of what we call Proactive Cyber Defense. It was important to us that AI be anchored to that end-to-end flow, not just triage. As you rethink processes and workflows around AI, also think about how you can connect those workflows across tools and silos.

Most SOCs don’t have time to fix detection rules before rolling out AI. We didn’t either. Instead, we leveraged the AI’s ability to highlight noisy detections by tracking which alerts were most often closed as false positives. That visibility drives a monthly process where engineering updates or retires problematic rules. Over time, this has drastically improved signal quality.

Use ongoing AI operations to surface the gaps, then use structured review cycles to close them. It’s one of the highest-leverage workflows you can build.
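The ranking that feeds such a monthly review can be very simple. Here is a minimal sketch, assuming closed alerts can be exported as `(rule_id, disposition)` pairs; the disposition label `"false_positive"`, the function name and both thresholds are hypothetical and would need tuning for a real environment.

```python
from collections import Counter


def noisy_rules(closed_alerts, min_alerts=20, fp_threshold=0.8):
    """Rank detection rules by the share of their alerts closed as false positives.

    closed_alerts: iterable of (rule_id, disposition) pairs.
    Rules with fewer than min_alerts alerts are skipped to avoid
    flagging a rule on the basis of a handful of cases.
    Returns (rule_id, false_positive_rate, alert_count) tuples, noisiest first.
    """
    totals = Counter()
    false_positives = Counter()
    for rule_id, disposition in closed_alerts:
        totals[rule_id] += 1
        if disposition == "false_positive":
            false_positives[rule_id] += 1

    report = []
    for rule_id, count in totals.items():
        if count < min_alerts:
            continue
        fp_rate = false_positives[rule_id] / count
        if fp_rate >= fp_threshold:
            report.append((rule_id, fp_rate, count))

    # Highest false-positive rate first; alert volume breaks ties.
    return sorted(report, key=lambda row: (-row[1], -row[2]))
```

The output is a short list of candidate rules for engineering to update or retire, which keeps the review focused on the detections that cost analysts the most time.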

Compliance questions came early and often. Customers wanted to know how data residency worked, whether the model complied with GDPR, UAE or AU regulations, how decisions were audited and what controls governed AI’s behavior. We made sure to get our multitenant security and compliance controls right from the start—role-based access, sovereignty rules and region-specific controls.

That avoided rework and kept customers comfortable. We then built a standardized compliance packet. Having that material sped up onboarding and reduced friction. If you serve business units in other geographies or are a service provider supporting enterprise customers, assume compliance will be an early concern and be ready to respond before being asked.

As is often the case with new technologies, the core capabilities of AI SOC are strong and getting stronger, while the user experience is still catching up. We shadowed analysts during investigations to watch how they used the tool, and identified examples of extra clicks, hard-to-find context and confusing workflows. Fixing these issues required close collaboration with the vendor, but paid huge dividends. UX is not “nice to have.” If it’s clunky, analysts will quietly avoid the new tool.

Many of our customers have made significant investments in their SOAR environments. When we introduced AI-driven workflows, they wanted to know how both systems would operate side by side. We built a coexistence strategy of inventorying existing playbooks, deciding which tasks stayed automated by SOAR, determining where AI logic added value and planning gradual migration.

If you or your customers use SOAR, assume coexistence before convergence.

Our customer mix meant we needed SaaS for some clients and on-prem for others. Regulatory and sovereignty requirements drove these decisions. We also needed the platform to run as a multitenant deployment for our MSSP operations and spin up as a dedicated instance for single-tenant customers. Having a platform flexible enough to support all of these variations saved us major rework. If your SOC serves multiple sectors, regions or business units, assume you will need every deployment model and architect accordingly.

As we applied AI to security, we also needed to recognize that AI systems themselves would become targets. We started treating AI like any other sensitive system—logging, monitoring, governance and strong identity controls. Especially in agentic environments where AI takes actions, you need clarity around attribution and approval. Start building this discipline early.
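To illustrate what attribution and approval can look like in practice, the sketch below gates high-impact actions behind a named human approver and records every attempt in an audit log. It is a simplified illustration under stated assumptions: the action names, the `HIGH_IMPACT` set and the log fields are hypothetical, and a real deployment would write to tamper-resistant storage rather than a Python list.

```python
from dataclasses import dataclass
from datetime import datetime, timezone

# Hypothetical set of actions that must never run without human approval.
HIGH_IMPACT = {"isolate_host", "disable_account"}


@dataclass
class ProposedAction:
    action: str        # what the agent wants to do
    target: str        # host, account or other object acted on
    proposed_by: str   # identity of the AI agent, for attribution


def run_action(proposed: ProposedAction, audit_log: list, approved_by=None) -> bool:
    """Decide whether an action may run, and log the decision either way.

    Low-impact actions run without approval. High-impact actions run only
    when approved_by names a human approver. Returns True if the action
    is allowed to execute.
    """
    needs_approval = proposed.action in HIGH_IMPACT
    allowed = (not needs_approval) or (approved_by is not None)
    audit_log.append({
        "time": datetime.now(timezone.utc).isoformat(),
        "action": proposed.action,
        "target": proposed.target,
        "proposed_by": proposed.proposed_by,
        "approved_by": approved_by,
        "executed": allowed,
    })
    return allowed
```

Logging blocked attempts as well as executed ones means the audit trail shows who proposed what, who approved it and what the system declined to do.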

One surprising benefit was how much analysts improved by watching the AI work. When analysts see an alert that “looks like the last one,” they almost always apply the same approach they used before. AI investigates each incident from scratch instead of assuming patterns will repeat, which means it often takes paths analysts wouldn’t have considered.

We began reviewing these cases in team discussions, breaking down what the AI did differently. This helped analysts expand their own investigative approaches while also improving AI quality through better feedback.

If you are a security operations leader in a large enterprise, you’re already balancing more alerts, more compliance pressure, more stakeholders and more complexity than ever. AI won’t replace your SOC. But used well, it will buy back hours, expose inefficiencies and scale up your team’s capacity. Start with clear expectations, a scorecard and a plan for context and coexistence.

Do that, and you’ll see meaningful improvements in weeks—not quarters.